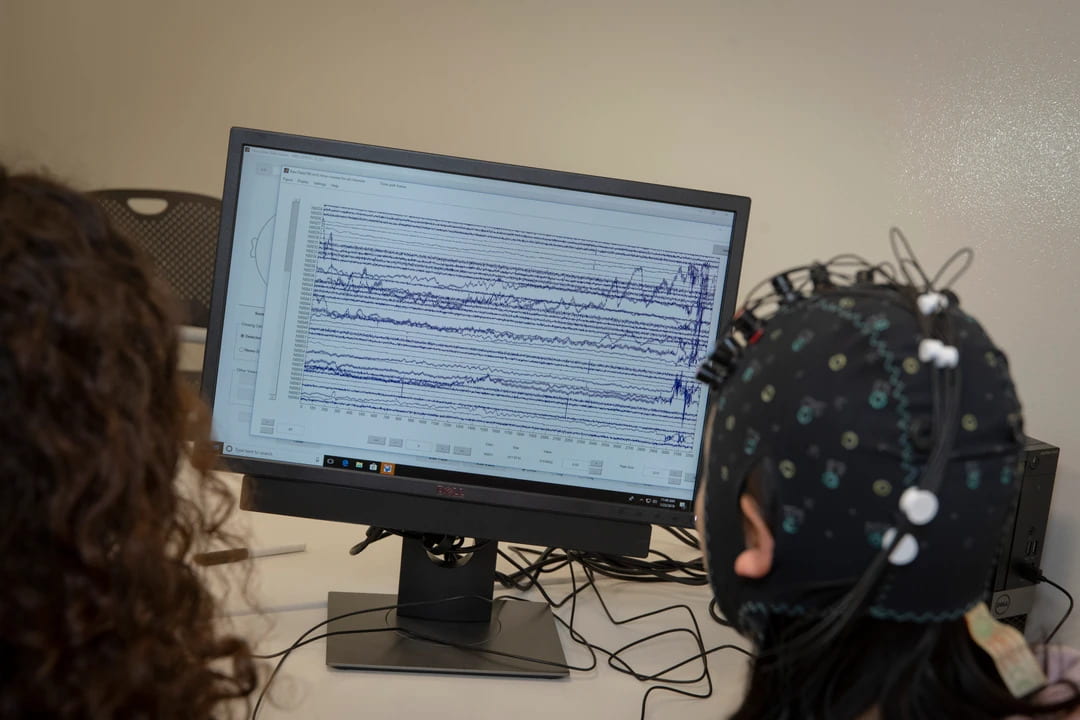

Could a computer detect a person’s emotions? Could it tell when someone is frustrated over something like a tricky math problem on an online tutoring program? Could it detect deep reflection on a problem?

This project, a collaboration with Erin Walker at Arizona State University and Kate Arrington at Lehigh University, is part of NSF’s support of Understanding the Brain and the BRAIN Initiative, a coordinated research effort that seeks to accelerate the development of new neurotechnologies. We will explore using measurements of brain activity from lightweight brain sensors, alongside student log data, to understand important mental activities during learning. The project will also allow us to explore novel human-computer interaction paradigms that make use of sensors providing passive, continuous, implicit, but noisy input to interactive systems. This work has implications for the growing fields of brain-computer interfaces, wearable computing, physiological computing, and ubiquitous computing.

Positions

We are looking for motivated undergraduate and graduate research assistants for this project. We have both paid and volunteer positions, and students can also enroll in an independent study for credit. Work will involve designing experiments, running human subjects experiments, and performing data analysis and machine learning on multidimensional time series data.