MER Lab conducts research on robotic manipulation strategies and their applications. We integrate computer vision, control theory, and machine learning techniques to design skillful and robust robots.

One of the major goals of the MER Lab is to identify environmental problems (e.g., recycling, waste sorting) that robots can alleviate, and to develop solutions for the missing manipulation capabilities. MER Lab also focuses on benchmarking efforts for robotic manipulation, dexterous manipulation, visual servoing, soft robot control, and active vision.

For any questions, please e-mail bcalli@wpi.edu.

NEWS

Leveraging Human-inspired Dexterous Picking Skills for Complex Multi-Object Scenes

December 2024

We developed a new methodology that applies human-inspired picking skills to objects in cluttered scenes. Our system decides which skill to use for each object, and how to use it, based on a contextual understanding of the scene.

Learn more from the following paper:

Leveraging Dexterous Picking Skills for Complex Multi-Object Scenes

Anagha Rajendra Dangle, Mihir Pradeep Deshmukh, Denny Boby, Berk Calli

2024 IEEE-RAS 23rd International Conference on Humanoid Robots (Humanoids)

[Paper]

SOFT ROBOT CONTROL VIA VISUAL SERVOING: ENABLING SHAPE CONTROL

October 2024

Our lab developed a vision-based shape control algorithm for soft robots that does not require a robot model or a reference image. The method currently works in 2D, and we are working on a 3D extension. Learn more from the following paper:

Shape Control of Variable Length Continuum Robots Using Clothoid-Based Visual Servoing

Abhinav Gandhi, Shou-Shan Chiang, Cagdas D Onal, Berk Calli

IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS) 2023

[Paper]

Older Announcements

CONTROL YOUR ROBOTS WITH VISION WITHOUT MARKERS

January 2023

We developed a set of algorithms that enable vision-based full-body robot control without markers. Learn more from the videos below:

NEW ROBOTICS TECHNOLOGIES FOR THE SHIPBREAKING INDUSTRY

October 2023

Our lab has been working on key robot capabilities to enable robotic shipbreaking. Learn more from the following papers:

Vision-based Oxy-fuel Torch Control for Robotic Metal Cutting

James Akl, Yash Patil, Chinmay Todankar, Berk Calli

IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS) 2023

[Paper]

Feature-driven Next View Planning for Cutting Path Generation in Robotic Metal Scrap Recycling

J. Akl, F. Alladkani, B. Calli

IEEE Transactions on Automation Science and Engineering, 2023

[Paper]

Cut Sequencing Algorithm for Safely Disassembling Large Structures

James Akl, Sumanth Pericherla, Berk Calli

IEEE Conference on Decision and Control (CDC), 2023

[Paper]

CNN-Based Task State Estimation for Safer Automation of Oxy-Fuel Metal Cutting

James Akl, Shreedhar Kodate, Berk Calli

IEEE 19th International Conference on Automation Science and Engineering (CASE), 2023

[Paper]

Our Undergraduates Implemented a Waste Sorting Robot for Recycling

May 2022

Find out more here: https://www.wpi.edu/news/wpi-students-develop-robotic-technology-help-workers-sort-trash-recycling-centers

[More info about our robotic waste recycling research]

First Public Dataset From a Recycling Plant

April 2022

Our paper “ZeroWaste Dataset: Towards Deformable Object Segmentation in Cluttered Scenes” has been accepted to CVPR 2022! We introduce the first publicly available dataset for waste classification collected from an operating materials recovery facility (MRF). We also present baseline classification results with state-of-the-art algorithms. This work will enable researchers to develop vision and robotic systems for recycling applications. Thank you to Boston University and Washington University for the excellent collaboration!

[More info about our robotic waste recycling research]

Human Robot Partnership for Ship Breaking

2021

In collaboration with the European Metal Recycling company, we are developing a metal scrap cutting robot to break down large metal structures into small recyclable pieces. You can learn more from our paper “Towards Robotic Metal Scrap Cutting: A Novel Workflow and Pipeline for Cutting Path Generation”, published in the proceedings of CASE 2021.

[More info about our metal scrap recycling research]

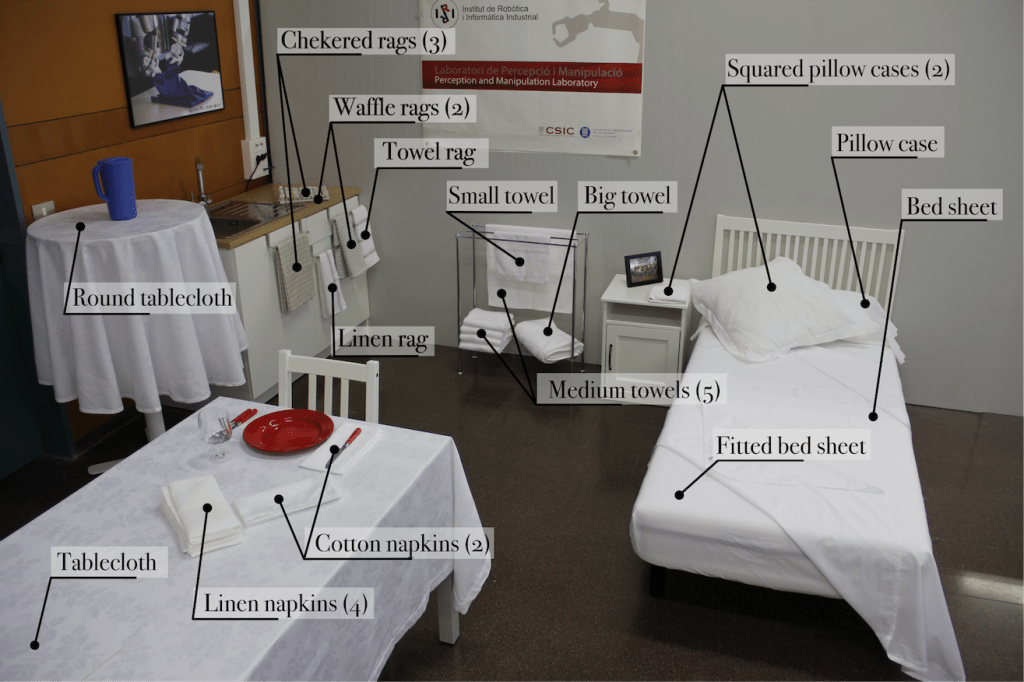

Towards a cloth object set

Our paper “Household Cloth Object Set: Fostering Benchmarking in Deformable Object Manipulation” has been accepted to RA-Letters! In this paper, we discuss how to form a set of cloth objects for developing benchmarking protocols to be used in cloth manipulation research. Thanks to Institut de Robòtica i Informàtica Industrial for leading this effort and to UMass Lowell for the great collaboration.

[More info about our benchmarking for manipulation research]